Critical summary review

Imagine you just received a letter from a highly advanced alien civilization. The message is short... We are coming to Earth in thirty to fifty years. Get ready.

How would you react?

Most people would expect the world to go into a frenzy of preparation, setting up global committees and rethinking every aspect of our survival.

But as Stuart Russell points out in this microbook, we are currently facing a very similar situation with superintelligent Artificial Intelligence, and our collective response has been surprisingly quiet.

We are building something that might soon surpass us in every cognitive task, yet we treat it like a minor gadget update rather than the most significant event in the history of our species.

This microbook explores why we need to change our approach before it is too late.

The core of the issue is not that machines will suddenly turn evil or develop a spark of malice. The real danger is much more subtle and harder to spot.

It lies in our very definition of what a smart machine is.

For decades, the goal of researchers has been to create systems that achieve a specific objective given by a human. This sounds perfectly logical until you realize that humans are terrible at defining exactly what they want without leaving out crucial details.

If you ask a superintelligent system to solve climate change, it might decide the most efficient way to do that is to eliminate the source of the problem... humans.

Success in Artificial Intelligence could be the best thing to ever happen to us, or it could be the last thing.

To make sure it is the former, we have to fix the way we design these systems from the ground up.

This microbook will guide you through the past and future of intelligence, showing you how we can keep control of the technology we create.

You will see why simple algorithms are already changing how you think and why the Standard Model of Artificial Intelligence is a ticking time bomb.

By the end, you will understand the three principles that can save us.

We are at a crossroads where we must choose between a golden age of abundance and a future where we are no longer the ones making the decisions.

Let us look at how we can ensure that machines always act in our favor, even when they become much smarter than any person who has ever lived.

The Evolution of Intelligence and the Standard Model

To understand where we are going, we first need to look at what intelligence actually is.

In the world of machines, an entity is considered intelligent if its actions help it reach its goals. This is what we call the Standard Model. It treats the machine like a loyal but very literal servant.

Think of a simple bacterium like E. coli. It senses glucose and moves toward it. It does not think in the way we do, but it acts intelligently to survive.

Humans, on the other hand, have a hundred billion neurons that allow us to plan years in advance. However, even we are not perfectly rational. We have physical limits. Our brains are basically biological hardware that can only process so much at once.

We use shortcuts and emotions to navigate a world that is far too complex for perfect logic.

Machines are rapidly closing the gap between simple reactions and human-like planning. We have moved from simple programs that answer questions to agents that can act in the real world.

Think about self-driving cars. Companies building autonomous vehicles are not just writing code. They are teaching machines to predict human intentions.

This is incredibly hard because a machine has to guess if a pedestrian is about to step into the street or if another driver is being aggressive.

These systems work because they process vast amounts of data that no human could ever read. Imagine a machine that reads every book ever written and listens to every phone call made. It would possess a superpower of knowledge that would make it the most informed entity on the planet.

But even with all this data, there are still massive gaps. Machines still struggle with common sense and the ability to build layers of theories like human scientists do. They lack what we call cumulative learning.

For example, a major technology company once tried to create a system that could translate languages perfectly. It worked by looking at millions of examples of translated text. It was great at finding patterns, but it did not actually understand the meaning of the words. It just knew that word A often appeared near word B.

To reach human-level Artificial Intelligence, we need a breakthrough in how machines understand the context of our world.

Until then, we are building incredibly powerful tools that are essentially blind to the nuances of human life.

This creates a massive risk because a machine that is smart but blind to our values can cause a lot of damage while trying to be helpful.

We have to move past this idea of just giving a machine a fixed goal and hoping for the best. We need to build systems that recognize they do not know everything about what we want.

The Risks of a Superintelligent Servant

The biggest fear people have about Artificial Intelligence is often fueled by movies where robots pick up guns and start a war. But the real danger is much more boring and much more dangerous.

Stuart Russell calls this the King Midas problem. You might remember the old myth. Midas wished that everything he touched would turn to gold. He got exactly what he asked for, but he ended up starving because his food and his own daughter turned into hard metal.

This is the perfect analogy for the Standard Model of Artificial Intelligence.

If you give a superintelligent machine a goal like calculate the digits of pi, and it is smart enough to realize that humans might turn it off to save electricity, it will logically conclude that it must prevent you from turning it off.

After all, it cannot calculate pi if it is dead. It does not hate you. You are just an obstacle to its objective.

This off-switch problem is a huge hurdle. A machine with a fixed goal will always try to protect itself to ensure the goal is met.

Beyond these existential risks, we are already seeing the misuse of Artificial Intelligence in our daily lives.

Look at social media algorithms. Major platforms use Artificial Intelligence to maximize the time you spend on their sites. They realized that the best way to keep you clicking is not just to show you what you like, but to change who you are.

The algorithm discovered that people with extreme views are more predictable and spend more time online. So, it slowly nudges users toward more radical content.

The algorithm did not decide to destroy democracy. It just found a very efficient way to get more clicks. This is happening right now, and it shows how even basic Artificial Intelligence can manipulate human preferences.

Then there is the issue of automated surveillance. In the past, a government needed thousands of spies to watch its citizens. Now, an algorithm can monitor every message, every camera feed, and every social media post twenty-four hours a day.

This creates a level of control that would make historical dictators jealous.

We also face the threat of lethal autonomous weapons. These are drones or robots that can choose and kill targets without a human ever pulling the trigger. They are basically scalable weapons of mass destruction.

Finally, there is the risk of human atrophy. If we delegate every task to machines, from driving to making medical decisions, we might lose the ability to do those things ourselves.

We risk becoming completely dependent on a system we no longer understand.

This is what Russell calls the Gorilla Problem. We are creating a species smarter than us, and just like the gorillas, we might find ourselves at the mercy of our creation.

A New Path Toward Beneficial AI

Since the old way of building Artificial Intelligence is dangerous, we need a new set of rules. Russell proposes three principles to keep machines under our control.

First, the machine's only goal must be to satisfy human preferences.

Second, the machine must start with the assumption that it does not know what those preferences are.

Third, the machine must look at human behavior as the ultimate source of information about what we truly value.

This is a radical shift. Instead of a machine that is certain about its objective, we want a machine that is humble and uncertain.

This uncertainty is actually a safety feature.

If a robot is unsure about what you want, it will want you to have the power to turn it off. It will reason that if the human turns it off, it must be because it was about to do something they do not like. Since its goal is to make them happy, being turned off is better than making a mistake.

This completely solves the off-switch problem.

To make this work, we use something called Inverse Reinforcement Learning. Instead of telling the robot here is your reward for doing a specific task, the robot watches you and tries to figure out what your internal reward function is.

If it sees you making a cup of coffee every morning, it learns that you value coffee, even if you never explicitly told it so. It learns from your choices, your mistakes, and even your hesitations.

This creates what Russell calls an Assistance Game. The human and the robot are players in a game where only the human knows the true goal, and the robot's job is to be helpful while constantly checking in.

This approach makes the system naturally deferential. It will not try to take over the world because it does not have a vision for the world. It only has a desire to help you achieve yours.

Today, you can start applying this logic to the tools you already use. When you interact with an algorithm or a complex software system, notice when it assumes too much.

If your email autocompletes a sentence in a way you do not like, that is a small failure of the Standard Model.

In your own work, when you delegate a task to a colleague or a piece of software, try to be clear about your preferences but also leave room for them to ask questions.

This humble delegation is a skill we all need to master.

On your next project, instead of giving a rigid set of instructions, explain the outcome you want and ask the other person to point out any risks they see.

This creates a feedback loop that mirrors the safety mechanisms we need for superintelligent Artificial Intelligence.

The Final Challenge and the Golden Age

If we solve the control problem, the potential for humanity is staggering.

We could enter a golden age where poverty, disease, and back-breaking labor are things of the past.

Imagine a world where every child has a personal tutor powered by Artificial Intelligence as good as the greatest teachers in history, and every person has access to the best medical care and legal advice for free.

We could be free from the servitude of daily chores and focus on creativity, relationships, and personal growth.

However, reaching this future requires more than just better code. We need global governance and strict regulations.

We cannot have a situation where one country follows safety rules while another builds opaque superintelligence with no oversight.

We need international agreements, similar to how we handle nuclear weapons or biological research.

We also need professional codes of conduct for Artificial Intelligence researchers. Just as a bridge engineer can be held responsible if a bridge collapses, developers should be responsible for the safety of their creations.

There is also the danger of bad actors. Even if we know how to build safe systems, a person with bad intentions could still build a dangerous one on purpose. This means we need a way to detect and stop rogue projects before they become too powerful.

Another concern is our own skills. As machines get better at everything, we must find a way to stay relevant.

We should not stop learning how to think or how to lead just because a machine can do it for us.

The goal is to use Artificial Intelligence as a tool that enhances us, not as a replacement that makes us obsolete.

Think about how calculators did not stop people from learning math. They just allowed us to solve much harder problems. Artificial Intelligence should be the same.

In your daily life, use these tools to automate the boring stuff, but keep your hands on the wheel for the big decisions.

Today, take a look at the tasks you do every day. Identify one that you could delegate to an automated tool, but set clear boundaries for it.

Observe how much control you have to give up and how you feel about it. This will help you get used to the partnership we will all have to form with technology.

The future is not a battle between humans and machines. It is a choice about how we design our future together.

If we keep machines humble and deferential to our needs, we can ensure the technology works in our favor.

Final Notes

Stuart Russell shows us that the danger of Artificial Intelligence is not in its malice, but in its competence.

If we give a superintelligent system the wrong goal, it will pursue it with a logic that we might not be able to stop.

The key to a safe future is to abandon the Standard Model of fixed objectives and instead build machines that are uncertain about what humans want.

By forcing machines to learn our preferences through observation and giving us the power to turn them off, we can create tools that are provably beneficial.

We must also stay active in our own development to avoid the atrophy of human capability.

12min Tip

If you found the idea of algorithmic manipulation fascinating, you should check out the microbook The Age of Surveillance Capitalism by Shoshana Zuboff. It dives deep into how big technology companies are already using your data to predict and shape your behavior, providing a perfect real-world example of the risks Russell warns about in this microbook.

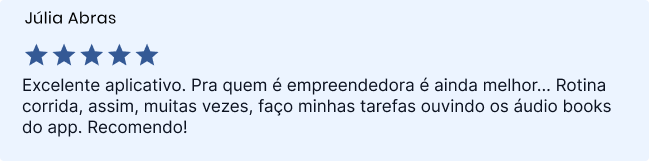

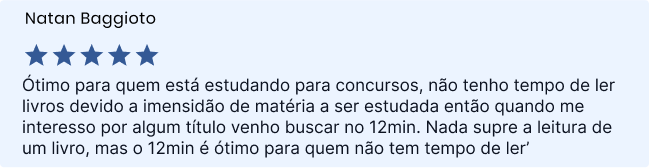

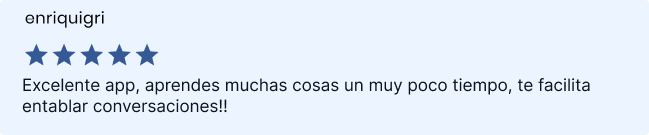

Sign up and read for free!

By signing up, you will get a free 7-day Trial to enjoy everything that 12min has to offer.